Online Firmware Upgrades (OLFU)

Online Firmware Upgrades means that the storage controllers can be updated with minimal disruption to data being transferred, by using NPIV as discussed in 3PAR StoreServ 7000 Software – Part 1.

Call me old school, but I’m still not 100% comfortable performing live firmware upgrades on SANs. However, I do appreciate that some businesses, such as cloud providers, have no other alternative.

3PAR introduced a new process for upgrading from 3.1.1 to 3.1.2, which works as follows:

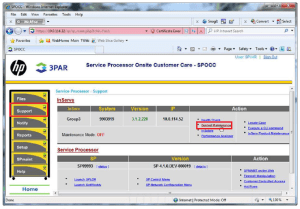

1. Firmware is loaded into the Service Processor

2. Service Processor copies the new code onto all nodes

3. Each node is updated one by one

4. Each node continues to run the old firmware until every node has been upgraded

5. A copy of the old firmware is kept in the ‘altroot’ directory
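As a rough way to picture the steps above, here is a small Python model of the sequence. It is only a sketch: `Node`, `stage_firmware` and `activate_firmware` are names made up for illustration, nothing here talks to a real array, and the idea that the switch-over happens in one go after every node has been updated is my reading of step 4 rather than documented behaviour.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Toy model of a controller node (illustrative fields, not 3PAR's)."""
    node_id: int
    running: str        # firmware version the node is currently running
    staged: str = ""    # new firmware copied to the node but not yet active
    altroot: str = ""   # copy of the previous firmware


def stage_firmware(nodes, new_version):
    """Steps 2-3: update each node one by one; old code keeps running."""
    for node in nodes:
        node.staged = new_version


def activate_firmware(nodes):
    """Steps 4-5: switch over only once every node has been updated."""
    if any(not node.staged for node in nodes):
        raise RuntimeError("not every node has been updated yet")
    for node in nodes:
        node.altroot = node.running   # keep the old firmware in 'altroot'
        node.running = node.staged
        node.staged = ""
```

Calling `stage_firmware` on a list of nodes leaves every `running` value untouched; only `activate_firmware` changes it, and it refuses to run if any node has been missed.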

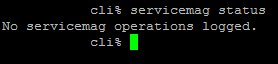

The upgrade process has a timeout value of 10 minutes. A few commands to have in your upgrade toolbox are:

- upgradesys -status

- checkhealth

- checkupgrade
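If you wanted to watch an upgrade from a script, the 10 minute timeout could be mirrored with a simple polling loop. The sketch below is Python and makes some loud assumptions: `get_status` is a hypothetical callable standing in for however you run `upgradesys -status` and interpret its output, and the `"complete"` string is invented, as the real output format isn't shown here.

```python
import time

UPGRADE_TIMEOUT_SECONDS = 10 * 60  # the 10 minute timeout mentioned above


def wait_for_upgrade(get_status, timeout=UPGRADE_TIMEOUT_SECONDS,
                     interval=30, clock=time.monotonic, sleep=time.sleep):
    """Poll get_status() until it reports completion or the timeout expires.

    get_status is a hypothetical callable returning a short status string;
    "complete" is an assumed value, not real upgradesys output.
    Returns True on completion and False on timeout.
    """
    deadline = clock() + timeout
    while True:
        if get_status() == "complete":
            return True
        if clock() >= deadline:
            return False
        sleep(interval)
```

The `clock` and `sleep` parameters are only there so the loop can be exercised without actually waiting 10 minutes.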

Common reasons for a failed upgrade are:

- Disks may have degraded or failed.

- LUNs may not have Native and Guest ports configured correctly and therefore would not be able to connect on the alternative node.

If everything has gone wrong, you can roll back the upgrade with the upgradesys -revertnode command.

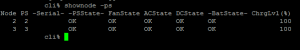

Node Shutdown Active Tasks

3PAR OS 3.1.1 would not allow you to shut down a node with active tasks, which I think is a thing of beauty. However, with 3PAR OS 3.1.2, when you issue the command

shutdownnode reboot 0

you will be asked to confirm that you really want to do this. If you answer yes, any active tasks are stopped and the node is rebooted. I haven’t been able to test this yet; however, my understanding is that some tasks will automatically resume after the node has been rebooted.
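The difference between the two releases can be summed up in a few lines of Python. This is purely a model of the behaviour described above, not anything the array runs: `reboot_node`, `parse_version` and the `confirm` callable are hypothetical names, and the automatic resuming of tasks after the reboot is left out because I haven't verified it.

```python
def parse_version(text):
    """Turn a version string such as "3.1.2" into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


def reboot_node(os_version, active_tasks, confirm):
    """Model a node reboot request; confirm() returns True for "yes"."""
    refused = {"rebooted": False, "stopped_tasks": []}
    # 3.1.1 behaviour: a node with active tasks cannot be shut down.
    if active_tasks and parse_version(os_version) < (3, 1, 2):
        return refused
    # 3.1.2 behaviour: ask first, then stop the tasks and reboot.
    if active_tasks and not confirm():
        return refused
    return {"rebooted": True, "stopped_tasks": list(active_tasks)}
```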

Delayed Export After Reboot

This is actually pretty handy, especially if you need to make sure that certain LUNs are presented in a particular order after a power outage.

I haven’t been able to think of a particular application that would require this feature; nevertheless, it’s still handy to know it can be used.

Online Copy

Online Copy is 3PAR’s term for making a clone of a virtual volume. It allows backup products to make snapshots of virtual volumes directly, reducing the impact of backups on VMs. Perhaps more importantly, it allows entire VMs to be recovered more quickly than with instant restore mechanisms, which run the VM from the backup target and ultimately result in performance degradation. Veeam have announced support for this integration for Q1 2013.

An enhancement with 3PAR OS 3.1.2 is that you no longer have to wait for the copy to complete before you can gain access to the copied volume.

So how does this work?

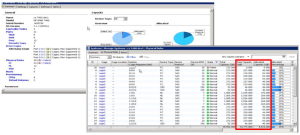

Step 1 – A read-only and a read-write snapshot of the source volume are created. A copy volume is then created, which could be on a lower tier of disks.

Step 2 – A logical disk volume (LDV) is created to map the copy volume to. Next, a thin provisioned virtual volume is created along with another LDV.

Step 3 – Region moves then take place from one LDV to another.

Step 4 – The copy volume can now be exported whilst the region moves continue in the background (that is pretty awesome).

Step 5 – Once the region moves complete, the read-only and read-write snapshots are removed, as well as the LDVs. Last of all, the thin provisioned volumes are removed.
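To show why the copy can be used before it has finished, here is a heavily simplified Python model of the steps above. Treat it as a sketch only: the `OnlineCopy` class and its methods are invented for illustration, the LDVs and thin provisioned volumes are not modelled at all, and serving reads of not-yet-moved regions from the snapshot is my assumption about how early access works.

```python
class OnlineCopy:
    """Toy model of an online copy; regions are just list entries."""

    def __init__(self, source_regions):
        # Step 1: take a point-in-time snapshot of the source volume.
        self.snapshot = list(source_regions)
        # The copy volume starts empty; 'moved' tracks finished regions.
        self.copy = [None] * len(self.snapshot)
        self.moved = [False] * len(self.snapshot)

    def move_next_region(self):
        """Step 3: move one region; return False when none are left."""
        for index, done in enumerate(self.moved):
            if not done:
                self.copy[index] = self.snapshot[index]
                self.moved[index] = True
                return True
        return False

    def read(self, index):
        """Step 4: read the exported copy while moves are still running.

        Assumption: regions not yet moved are served from the snapshot.
        """
        if self.moved[index]:
            return self.copy[index]
        return self.snapshot[index]

    def finish(self):
        """Step 5: complete the remaining moves, then drop the snapshot."""
        while self.move_next_region():
            pass
        self.snapshot = None
```

In this model a read returns the same data whether or not the region has been moved yet, which is the whole point of being able to export the copy early.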

3PAR believe that online copies are faster as they use the ‘region mover’; however, no performance figures have been released to substantiate this. A few things to note:

- Online copies need a start and an end point, and therefore there has to be an interruption in I/O

- Online copies cannot be paused, only cancelled

- Online copy does not support snapshot copying

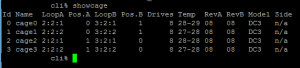

- Online copy can only be performed via the CLI