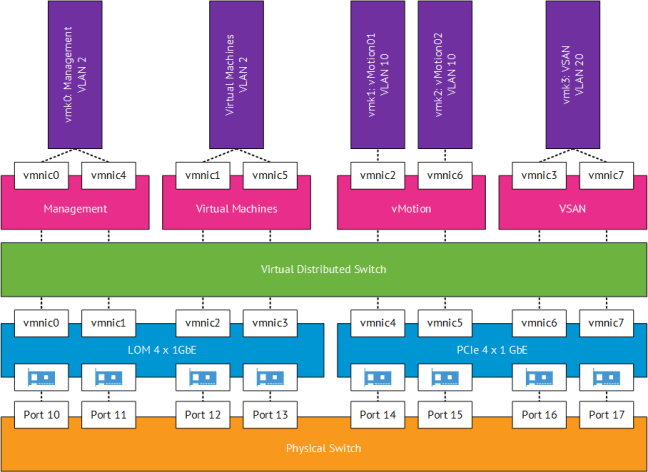

It’s time to shake things up a little and introduce VSAN into the VMFocus.com lab. Before we go any further, the logical diagram of the configuration is shown above.

Hardware

ESXi Hosts

As mentioned in the VMFocus.com lab, I have 3 x HP DL380 G6, each with the following specification:

- 2 x Intel Xeon L5520 Quad Core 2.26GHz, giving a total of 8 physical cores (16 threads with Hyper-Threading)

- 56GB RAM

- 8 x 1Gb NICs (2 x built-in dual port, 1 x quad port)

- 1 x P410 Smart Array 6Gb/s

- 1 x Samsung EVO 250GB 2.5″ SSD 6Gb/s

- 1 x Hitachi Travelstar 7.2K 1TB 2.5″ SATA 6Gb/s

- 2 x HP 72GB 15K SAS HDD

- One Host has an extra 2 x 300GB 10K 2.5″ HDD

- 2 x PSU

- 1 x iLO

- 1 x 8GB SD Card

Networking

- 1 x HP v1910 24G Layer 2 switch with static routing

Configuration

ESXi Host

- Boot

  - The plan is to boot ESXi from the internal SD card.

- Management Network

  - vDS with a Port Group consisting of two active 1 Gbps interfaces, providing a resilient VMkernel Management network.

- VSAN Network

  - vDS with a Port Group consisting of two active 1 Gbps interfaces, providing a resilient VSAN network.

- vMotion Network

  - vDS with a Port Group consisting of two active 1 Gbps interfaces, providing a Multi-NIC vMotion network.

- Virtual Machine Network

  - vDS with a Port Group consisting of two active 1 Gbps interfaces, providing an active/active Virtual Machine network.

- Backups

  - Veeam 8 will be used, as it is compatible with VSAN. The ESXi Host with the 2 x 300GB disks configured in RAID 1 will be used as the backup repository.
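Each of the four network traffic types above gets its own pair of 1 Gbps uplinks, which accounts for all 8 physical ports in a host. As a minimal sketch, the layout can be written down as data and sanity-checked in Python. The vmnic names and the port-to-traffic mapping are hypothetical, since the post does not say which physical port carries which traffic type, and `validate` is an illustrative helper rather than any VMware tool.

```python
# Hypothetical per-host uplink layout: four port groups x two 1 Gbps uplinks.
# vmnic names are assumptions; the post does not map ports to traffic types.
PHYSICAL_NICS = [f"vmnic{i}" for i in range(8)]

PORT_GROUPS = {
    "Management": ["vmnic0", "vmnic1"],
    "VSAN": ["vmnic2", "vmnic3"],
    "vMotion": ["vmnic4", "vmnic5"],
    "VirtualMachine": ["vmnic6", "vmnic7"],
}


def validate(port_groups, nics, uplinks_per_group=2):
    """Return a list of problems; an empty list means the layout is consistent."""
    problems = []
    owner = {}  # uplink -> port group that claimed it first
    for group, uplinks in port_groups.items():
        if len(uplinks) != uplinks_per_group:
            problems.append(
                f"{group}: expected {uplinks_per_group} uplinks, got {len(uplinks)}"
            )
        for nic in uplinks:
            if nic not in nics:
                problems.append(f"{group}: unknown uplink {nic}")
            elif nic in owner:
                problems.append(f"{nic} is shared by {owner[nic]} and {group}")
            else:
                owner[nic] = group
    # Any physical port not claimed by a port group is wasted capacity.
    unused = [nic for nic in nics if nic not in owner]
    if unused:
        problems.append("unused uplinks: " + ", ".join(unused))
    return problems


if __name__ == "__main__":
    issues = validate(PORT_GROUPS, PHYSICAL_NICS)
    print("layout OK" if not issues else "\n".join(issues))
```

Keeping exactly one traffic type per pair of uplinks is what makes this layout easy to troubleshoot: a problem on one pair cannot silently affect another traffic type.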

VSAN

Initial Deployment

You get a bit of a chicken-and-egg scenario with VSAN, as you need vCenter available before your VSAN Cluster can be created. My plan is to use the 2 x HP 72GB 15K SAS HDDs in RAID 1 to provide storage capacity and performance for Active Directory, vCenter and SQL before the VSAN cluster is formed. They can also be used to store ISOs in the future.

Disk Group

This is going to be a simple configuration, as I’m limited to one disk group per host because each ESXi Host has only one SSD.
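For context, VSAN 5.5/6.x hybrid disk groups have fixed limits: each group takes exactly one SSD as the cache tier and 1 to 7 magnetic disks as the capacity tier, and a host can hold at most 5 groups. The Python sketch below shows why a single SSD caps each host at one disk group. It assumes that only the 1TB Travelstar is claimed for capacity on each host, with the 72GB SAS pair kept as the RAID 1 local datastore; `plan_disk_groups` is a hypothetical helper, not a VMware API.

```python
# VSAN hybrid disk-group limits (VSAN 5.5 / 6.x), per ESXi host:
#   one SSD per disk group (cache tier), 1-7 magnetic disks per group
#   (capacity tier), and at most 5 disk groups per host.
MAX_DISK_GROUPS_PER_HOST = 5
MAX_HDDS_PER_GROUP = 7


def plan_disk_groups(ssd_count: int, hdd_count: int) -> tuple:
    """Return (disk groups, capacity disks that can be claimed) for one host."""
    # Each group consumes exactly one SSD and needs at least one HDD.
    groups = min(ssd_count, hdd_count, MAX_DISK_GROUPS_PER_HOST)
    # HDDs beyond 7 per group cannot be placed in any group.
    claimable_hdds = min(hdd_count, groups * MAX_HDDS_PER_GROUP)
    return groups, claimable_hdds


if __name__ == "__main__":
    # Assumption: per host, 1 x Samsung EVO SSD and 1 x 1TB Travelstar are
    # eligible; the 72GB SAS pair stays a RAID 1 local datastore.
    print(plan_disk_groups(ssd_count=1, hdd_count=1))  # -> (1, 1)
```

Adding more magnetic disks would not create a second disk group here; only a second SSD would.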

Storage Policy

Again, this is going to be straightforward. As it’s my home lab, I want a balance between capacity and performance, so I will use the default setting, which is ‘number of failures to tolerate = 1’.
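With ‘number of failures to tolerate’ set to n, VSAN keeps n + 1 replicas of each object plus witness components, which is why FTT = 1 needs at least 3 hosts. A rough Python sketch of the capacity trade-off for this lab, assuming each host contributes only its 1TB magnetic disk (in a hybrid configuration the SSD is cache, not capacity) and ignoring witness, metadata and free-space overheads. The function names are illustrative only.

```python
def ftt_requirements(ftt: int) -> dict:
    """Replicas and minimum hosts needed for 'number of failures to tolerate'."""
    # n failures need n + 1 copies of the data, plus n witnesses so that
    # a majority of the 2n + 1 components survives any n host failures.
    return {"replicas": ftt + 1, "min_hosts": 2 * ftt + 1}


def usable_capacity_tb(hosts: int, capacity_tb_per_host: float, ftt: int) -> float:
    """Rough usable capacity: raw magnetic capacity divided by the replica count.

    Ignores witness components, metadata and free-space headroom, so the
    real figure will be lower.
    """
    needed = ftt_requirements(ftt)["min_hosts"]
    if hosts < needed:
        raise ValueError(f"FTT={ftt} needs at least {needed} hosts, got {hosts}")
    raw = hosts * capacity_tb_per_host
    return raw / (ftt + 1)


if __name__ == "__main__":
    print(ftt_requirements(1))            # -> {'replicas': 2, 'min_hosts': 3}
    print(usable_capacity_tb(3, 1.0, 1))  # -> 1.5
```

In other words, FTT = 1 roughly halves the 3TB of raw capacity, and the three hosts in this lab are exactly the minimum that policy allows.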

Network

This could be a blog post in itself! VSAN has a number of requirements that have to be met:

- VSAN does not support multiple VSAN VMkernel interfaces on the same subnet for load balancing

- VSAN does support IP Hash Load Balancing, but if VSAN is the only type of traffic on a 1Gb network, you are unlikely to see any benefit over using Route Based on Originating Port ID with Explicit Failover

- VSAN does support multiple VSAN VMkernel interfaces on different subnets for load balancing

  - As I haven’t deployed VSAN yet, I’m not sure whether that applies to different disk groups, e.g. 1 x Disk Group in 192.168.1.x/24 and another 1 x Disk Group in 192.168.2.x/24. This is something I will have to test.
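To see why IP Hash adds little when VSAN is the only traffic, consider a simplified model in which the uplink is picked by XOR-ing the source and destination IPv4 addresses, modulo the number of uplinks. This illustrates hash-based teaming in general and is not ESXi's exact algorithm; the VMkernel addresses and the `ip_hash_uplink` helper are hypothetical.

```python
import ipaddress


def ip_hash_uplink(src: str, dst: str, uplink_count: int) -> int:
    """Simplified IP-hash model: XOR source and destination IPv4, modulo uplinks.

    Illustrates hash-based teaming in general; it does not reproduce
    ESXi's exact hash.
    """
    s = int(ipaddress.IPv4Address(src))
    d = int(ipaddress.IPv4Address(dst))
    return (s ^ d) % uplink_count


# Hypothetical VSAN VMkernel addresses, one per ESXi host.
VSAN_VMK = ["192.168.10.11", "192.168.10.12", "192.168.10.13"]

if __name__ == "__main__":
    src = VSAN_VMK[0]
    for dst in VSAN_VMK[1:]:
        # A given src/dst pair hashes to the same uplink for every packet,
        # so one host-to-host VSAN conversation never exceeds one 1 Gbps link.
        print(f"{src} -> {dst}: uplink {ip_hash_uplink(src, dst, 2)}")
```

With only three hosts there are very few fixed address pairs, so whether two conversations land on different uplinks depends on the hash rather than on load.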

With the above in mind, and given the constraints of my network (1 GbE with 8 physical NICs), I decided to go with a simple configuration using Load Based Teaming, with resilience at the pNIC level (the switch is a single point of failure), based on the following:

- 2 x 1 GbE physical NICs per traffic type, providing a simple configuration for troubleshooting (in case I encounter any issues)

- A single Port Group with NIOC could be leveraged across the 8 x 1 GbE physical NICs. However, shares would need to be configured, and VSAN traffic would only be entitled to approximately 571 Mbps per 1 GbE uplink during periods of congestion (think vMotion), assuming VSAN, one Normal traffic type and Management contend for the same uplink (7 shares in total):

  - High – 4 Shares – 571 Mbps for VSAN traffic

  - Normal – 2 Shares – 286 Mbps for vMotion/Virtual Machine traffic

  - Low – 1 Share – 143 Mbps for Management traffic

- NIOC on a 1GbE network is not supported

- NIC teaming is used for availability, not bandwidth aggregation

  - Route Based on Originating Port ID would be active/standby with an Explicit Failover order

  - Route Based on IP Hash would be active/active, but is unlikely to use the extra bandwidth, as the source and destination remain constant in VSAN intra-cluster communication

  - Route Based on Physical NIC Load (Load Based Teaming) provides an active/active configuration
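The NIOC figures above come from dividing a saturated 1 Gbps uplink in proportion to shares. They match a scenario where VSAN (4 shares), one Normal traffic type (2) and Management (1) contend, i.e. 7 shares in total, giving 4/7 × 1000 ≈ 571.4 Mbps for VSAN. A small Python sketch of that arithmetic, including a hypothetical busier case where vMotion and Virtual Machine traffic are both active; `nioc_entitlements` is an illustrative helper, not a vSphere API.

```python
def nioc_entitlements(shares: dict, link_mbps: float = 1000.0) -> dict:
    """Bandwidth each active traffic type is entitled to on a saturated uplink.

    NIOC shares are relative: each active type gets link * share / total.
    """
    total = sum(shares.values())
    return {name: link_mbps * share / total for name, share in shares.items()}


# Scenario matching the post's figures: VSAN (High), one Normal type, Management (Low).
THREE_WAY = {"VSAN": 4, "vMotion": 2, "Management": 1}
# Hypothetical busier case: vMotion and VM traffic contend at the same time.
FOUR_WAY = {"VSAN": 4, "vMotion": 2, "VirtualMachine": 2, "Management": 1}

if __name__ == "__main__":
    for label, scenario in (("three-way", THREE_WAY), ("four-way", FOUR_WAY)):
        for name, mbps in nioc_entitlements(scenario).items():
            print(f"{label}: {name} {mbps:.1f} Mbps")
```

With all four traffic types busy (9 shares), VSAN drops to roughly 444 Mbps, which supports giving VSAN its own pair of uplinks instead of sharing them under NIOC.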

A picture speaks a thousand words, so the proposed network logical diagram is shown below.

Nice post mate… I am going to play around with VSA in my lab… At work we use EMC so I don’t have much HP time 😉

Hi,

I have a similar configuration on our DL380 G6 (Dual sixcore, 32GB Mem).

We also use the Hitachi Travelstar 1TB HDD, but our fans are running at 78%.

Even worse, the P400 reports the disks as being in OVERHEATING status, and the data throughput to the disks is pretty poor. Could you obser

Hi Thomas, thanks for getting in touch.

The overheating issue can be caused by a number of factors. I would recommend reseating the internal components, checking airflow and changing your BIOS > Advanced Options > Thermal Configuration setting to Optimal Cooling. Next, I would upgrade the firmware to see if that resolves the issue.

The P400 isn’t really designed for the HP DL380 G6; I experienced the same problems. Can you obtain a P410i? You will get much better performance with it.

Best of luck.