It’s been a while since I wrote a blog post on the Azure updates which I have paid attention to in 2019. So strap yourself in, let’s see which ones make the list!

Azure Spot Virtual Machines

Need to run some VMs for a short period of time to undertake some testing or development work? Well, look no further than Azure Spot Virtual Machines, which Microsoft use to sell unused capacity. When they need that capacity back, they evict the VMs.
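Because a Spot VM can be taken back, it helps if the workload can spot the eviction notice and tidy up first. Below is a minimal Python sketch of one way to do that, assuming the VM polls the Scheduled Events endpoint of the Azure Instance Metadata Service and looks for events of type `Preempt`. The function names and the API version are my own choices for illustration, and the endpoint only answers from inside an Azure VM.

```python
import json
import urllib.request

# Assumption: Scheduled Events endpoint of the Instance Metadata Service.
# It is only reachable from inside an Azure VM, and the API version shown
# here is one I believe is valid; check the current documentation.
SCHEDULED_EVENTS_URL = (
    "http://169.254.169.254/metadata/scheduledevents?api-version=2020-07-01"
)


def find_preempt_events(document):
    """Return the scheduled events that signal a Spot eviction."""
    return [
        event
        for event in document.get("Events", [])
        if event.get("EventType") == "Preempt"
    ]


def fetch_scheduled_events(url=SCHEDULED_EVENTS_URL, timeout=5):
    """Fetch the scheduled events document (hypothetical helper)."""
    # The metadata service requires the "Metadata: true" header.
    request = urllib.request.Request(url, headers={"Metadata": "true"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

On a Spot VM you would call `find_preempt_events(fetch_scheduled_events())` on a short loop and checkpoint or drain work as soon as the list is non-empty.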

More information can be found here.

Azure Migrate – Agentless Dependency Mapping

Working out a migration to Azure for IaaS can be a bit tricky when a customer doesn’t have, or doesn’t know, what makes up an application service. Step in Azure Migrate – Agentless Dependency Mapping, which can discover interdependent systems that need to be migrated together.

Coupled with this, Azure Migrate can now perform application discovery using WMI and SSH calls to determine the apps, roles and features installed.
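To illustrate why dependency data matters, here is a small Python sketch that turns a list of observed connections into groups of servers that should move together. This is purely my own illustration and not how Azure Migrate works internally; the server names and the `migration_groups` function are hypothetical.

```python
from collections import defaultdict


def migration_groups(connections):
    """Group servers that talk to each other, directly or indirectly."""
    # Build an undirected graph: a connection ties both ends together.
    neighbours = defaultdict(set)
    for source, destination in connections:
        neighbours[source].add(destination)
        neighbours[destination].add(source)

    seen = set()
    groups = []
    for server in sorted(neighbours):
        if server in seen:
            continue
        # Walk everything reachable from this server (depth-first).
        group = set()
        stack = [server]
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            group.add(current)
            stack.extend(neighbours[current] - seen)
        groups.append(sorted(group))
    return groups


# Hypothetical connection data, e.g. exported from a dependency map.
observed = [("web01", "app01"), ("app01", "sql01"), ("file01", "backup01")]
print(migration_groups(observed))
```

Each group is a set of machines with no observed traffic to any other group, which makes it a reasonable first cut at a migration wave.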

More information can be found here.

Azure Dedicated Hosts

It’s not often in this day and age that a dedicated host is required in the public cloud. However, Microsoft now have the answer for this with the general availability of ‘Dedicated Hosts’, which provide a hardware-isolated environment.

More information can be found here.

Azure Cost Management – CSP

It was always a tough call for customers to choose between the commercial attractiveness of a CSP and the human-readable information provided using an EA.

On 1st November, Microsoft enabled CSP for Azure Cost Management, meaning customers now have end-to-end visibility of costs and budgets.

More information can be found here.

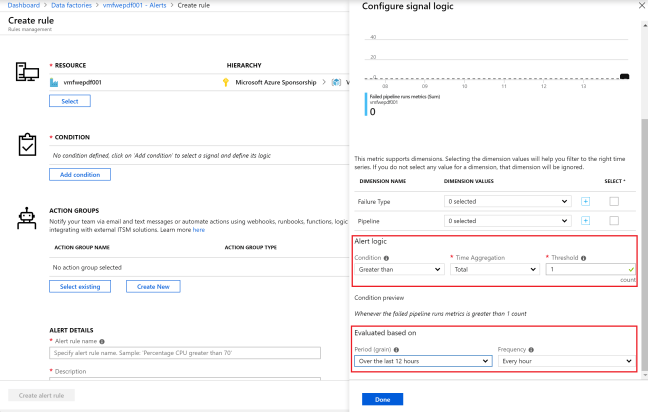

Azure Monitor

This particular service in Azure has had oodles of updates, which now enable it to provide a centralised repository for collecting, analysing and alerting. To be fair, I have used it extensively when troubleshooting issues, in particular when tuning Application Gateway with WAF functionality.

More information can be found here.

Azure Bastion

Providing secure, audited remote access to third parties or employees used to mean deploying Just in Time Access. However, this raised a few eyebrows as it was typically based on a source public IP address locked down to a specific TCP port.

Azure Bastion removes this risk and provides a PaaS service that lets you connect to RDP or SSH sessions over SSL.

More information can be found here.