This is the first in a short series of blog posts covering the configuration of an Azure Application Gateway.

Why, you might ask, am I creating a blog post series? For two reasons: firstly, I think the Application Gateway provides an extra level of protection for internet-facing applications, and secondly, I found the Microsoft documentation lacking in a few areas.

What is an Application Gateway?

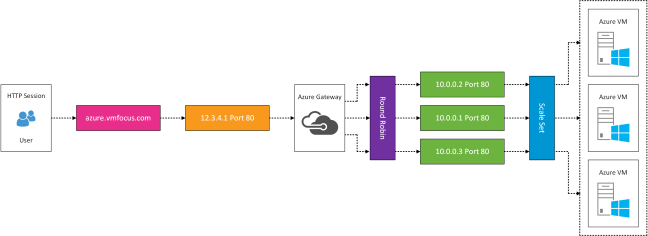

An Application Gateway is a dedicated virtual appliance that provides application delivery controller (ADC) services.

Benefits of using Application Gateway are:

- Provides Layer 7 load balancing and routing

- SSL Offload, moving the burden of decrypting traffic from internet-facing servers onto the Application Gateway

- End-to-End Encryption, where the Application Gateway terminates the SSL connection, applies routing rules and then re-encrypts the traffic before passing it to the back end

- Cookie-Based Session Affinity to ensure a user's requests are directed back to the same back-end server for the duration of the session

- Protects web applications from common attack scenarios such as cross-site scripting, SQL injection and session hijacks using web application firewall capabilities

- Custom health probes enabling specific application paths to be monitored

Drawbacks of using Application Gateway are:

- Increased complexity versus Load Balancers

- Can only be deployed when it is the first resource within a subnet

In this scenario I have AD FS running on Windows Server 2016 in Microsoft Azure, integrated with Azure AD via Azure AD Connect. A logical overview of the configuration is shown below.

The plan is to extend this design and include an Application Gateway running Web Application Firewall functionality. A logical configuration of the desired state is shown below.

Before we begin, I recommend that you verify your AD FS configuration to make sure it’s functioning correctly. You will also need your AD FS certificate available in order to perform the SSL Offload onto the Application Gateway.

Getting Everything Ready

I know you are itching to crack on, but I try to work in a logical order. So first of all, make sure you have your subnets defined correctly in Azure. The configuration I’m using is as follows:

VMF-WE-SUB01

172.16.1.0/24 – This subnet is the Trusted Network in the diagram above.

VMF-WE-SUB02

172.16.2.0/24 – This subnet is the DMZ Internal Network in the diagram above.

VMF-WE-SUB03

172.16.3.0/24 – This subnet is the DMZ External Network in the diagram above and will be used for the Application Gateway.

Important: the Application Gateway must be the first resource deployed in a newly created subnet.
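Before creating anything in the portal, it can be worth sanity-checking the address plan. The following is a minimal Python sketch, not part of my actual deployment, that confirms the three subnets above do not overlap and all sit inside the VNet. The VNet address space of 172.16.0.0/16 is an assumption for illustration only, as is the helper name `check_subnets`.

```python
import ipaddress

# Assumption: the VNet address space is not stated in this post;
# 172.16.0.0/16 is used purely for illustration.
VNET = ipaddress.ip_network("172.16.0.0/16")

SUBNETS = {
    "VMF-WE-SUB01": ipaddress.ip_network("172.16.1.0/24"),  # Trusted Network
    "VMF-WE-SUB02": ipaddress.ip_network("172.16.2.0/24"),  # DMZ Internal Network
    "VMF-WE-SUB03": ipaddress.ip_network("172.16.3.0/24"),  # DMZ External Network (Application Gateway)
}


def check_subnets(vnet, subnets):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    names = sorted(subnets)
    # Every subnet must fall inside the VNet address space.
    for name in names:
        if not subnets[name].subnet_of(vnet):
            problems.append(f"{name} is outside {vnet}")
    # No two subnets may share addresses.
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            if subnets[first].overlaps(subnets[second]):
                problems.append(f"{first} overlaps {second}")
    return problems


if __name__ == "__main__":
    issues = check_subnets(VNET, SUBNETS)
    print("Subnet plan OK" if not issues else "\n".join(issues))
```

This only validates the addressing on paper; it does not check what is already deployed in each subnet.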

WAP Servers

We need to get the thumbprint for our AD FS certificate and ensure it is bound correctly. Run the following command to obtain the Certificate Hash and Application ID:

netsh http show sslcert

Next, run the following command on both WAP servers, replacing the certificate hash and application ID with your own values:

netsh http add sslcert ipport=0.0.0.0:443 certhash=f2d9bb93d29a2c2c0835f4a4cb2d67d51efc5706 appid={5d89a20c-beab-4389-9447-324788eb944a}
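A thumbprint copied from the certificate dialog often arrives with spaces or stray characters, which makes the command above fail. As a rough sketch, the Python helper below strips anything that is not a hex digit, checks for the 40 characters of a SHA-1 thumbprint and assembles the same netsh command. The function names `normalise_thumbprint` and `build_netsh_command` are my own inventions for illustration, and the code only builds the string; it does not run netsh.

```python
import re


def normalise_thumbprint(raw):
    """Strip everything that is not a hex digit and validate the SHA-1 length."""
    cleaned = re.sub(r"[^0-9A-Fa-f]", "", raw).lower()
    if len(cleaned) != 40:
        raise ValueError(f"expected 40 hex characters, got {len(cleaned)}")
    return cleaned


def build_netsh_command(thumbprint, app_id, ipport="0.0.0.0:443"):
    """Assemble the 'netsh http add sslcert' command line as a string."""
    certhash = normalise_thumbprint(thumbprint)
    return f"netsh http add sslcert ipport={ipport} certhash={certhash} appid={app_id}"


if __name__ == "__main__":
    # Values taken from the command shown above; substitute your own.
    print(build_netsh_command(
        "F2 D9 BB 93 D2 9A 2C 2C 08 35 F4 A4 CB 2D 67 D5 1E FC 57 06",
        "{5d89a20c-beab-4389-9447-324788eb944a}",
    ))
```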

To verify the command has run correctly, view your SSL certificate bindings again; you should see IP:Port 0.0.0.0:443 tied to your AD FS certificate.
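With two WAP servers to check, eyeballing the output gets tedious. Here is a hedged Python sketch that parses saved output from `netsh http show sslcert` and confirms the binding. The sample text is an abbreviated illustration of the layout I would expect rather than real captured output, and the field labels may differ by Windows version or locale. The function names are hypothetical.

```python
# Illustrative, abbreviated sample of the expected layout (an assumption,
# not captured output). Values are the ones used earlier in this post.
SAMPLE = """
SSL Certificate bindings:
-------------------------

    IP:port                      : 0.0.0.0:443
    Certificate Hash             : f2d9bb93d29a2c2c0835f4a4cb2d67d51efc5706
    Application ID               : {5d89a20c-beab-4389-9447-324788eb944a}
    Certificate Store Name       : MY
"""


def parse_sslcert_bindings(text):
    """Parse 'netsh http show sslcert' text into a list of dicts, one per binding."""
    bindings = []
    current = None
    for line in text.splitlines():
        key, sep, value = line.partition(" : ")
        if not sep:
            continue  # headers, rulers and blank lines carry no "key : value" pair
        key, value = key.strip().lower(), value.strip()
        if key in ("ip:port", "hostname:port"):
            # Each binding starts with its endpoint line.
            current = {"endpoint": value}
            bindings.append(current)
        elif current is not None:
            current[key] = value
    return bindings


def is_bound(bindings, endpoint, thumbprint):
    """True if the endpoint is bound to the given certificate thumbprint."""
    wanted = thumbprint.lower()
    return any(
        b["endpoint"] == endpoint and b.get("certificate hash", "").lower() == wanted
        for b in bindings
    )


if __name__ == "__main__":
    found = parse_sslcert_bindings(SAMPLE)
    print(is_bound(found, "0.0.0.0:443", "f2d9bb93d29a2c2c0835f4a4cb2d67d51efc5706"))
```

To use it for real, redirect the netsh output to a text file on each WAP server and pass the file contents to `parse_sslcert_bindings` in place of `SAMPLE`.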

Deploy Application Gateway

Within the freshly created VMF-WE-SUB03 we are going to deploy an Application Gateway.

Let’s start by entering the basics. I’m calling mine the imaginative VMF-WE-AG01. It’s going to be a WAF, so I have selected the WAF tier. Finally, I will be using an existing Resource Group.

The VNet is VMF-WE-VNET01 and the subnet is VMF-WE-SUB03. We are going to create a Static Public IP Address (I’m calling mine VMF-WE-AG01-PIP). Finally, we will leave the Listener Configuration as HTTP for now.

That’s the Application Gateway deployment under way. It’s going to take a while, so I suggest you make a brew and get yourself ready for the next instalment.
