We are now ready for Recovery Plans! So the question is, what are they? Well, a Recovery Plan defines what we would like to happen in the event of a DR situation. Let me explain what I mean.

Let’s imagine you have two Exchange 2010 servers, one providing the CAS/Hub Transport role and the other providing the Mailbox role. You would want these to come up in a specific order: the Mailbox server first, then the CAS/Hub server. That’s all great, but I can hear you saying, ‘What about IP addresses? That’s going to cause me some proper dramas. In fact, what about DNS? All of the records are going to be wrong!’

Well, the panic is over: with SRM we can address all of these issues! We can:

- Bring virtual machines up in a certain order.

- Change a virtual machine’s IP address

- Run a script or batch file

Pretty cool eh? Right let’s crack on with the configuration.

Let’s select Recovery Plans from the bottom left hand menu and then Create Recovery Plan from the top right Commands box

Select your Recovery Site, in my case DR and click Next

From a design perspective, I would always recommend that you have a Recovery Plan per Protection Group as this gives you a higher level of control to fail over only particular virtual machines. In this case we are going to select PG_SATA_TEST01 and click Next

The next screen is quite interesting. We can have a ‘test network’ in our DR site which is preconfigured, so that rather than SRM creating a network for us, the virtual machines come up in a predefined network when we ‘test DR’. Why would I want to do this? Well, it gives you access to the virtual machines in the DR location so you can test connectivity between them.

In this scenario we are going to leave the ‘test network’ setting to Auto and click Next

Next we need to give the Recovery Plan a name. I’m going to be imaginative and call mine RP_SATA_TEST01. In the description I always reference the Protection Group that we are going to recover. Then click Next

We then get a summary screen, click Finish to complete.

Awesome, we should now have a Recovery Plan we can test. I’m itching to give it a whirl!

Before we do this, let’s take a quick swing by our HP StoreVirtual VSAs to make sure everything is ‘tickety boo’

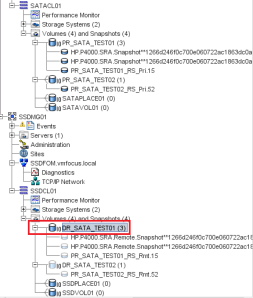

Let’s log in to the CMC, open both SATAMG01 and SSDMG01 and expand both clusters. Select PR_SATA_TEST01_RS and make sure the Status (on the right hand side) is ‘normal’

Awesome, let’s do a Test Recovery!

Select RP_SATA_TEST01 and then the Summary Tab and then click Test

We now get a pop up asking if we want to replicate recent changes or not for the test. If you select yes, SRM will use the SRA to send the commands to the HP StoreVirtual VSA to replicate the Volume PR_SATA_TEST01. I’m going to choose no, as I haven’t actually changed any data (we will do this later). Click Next

We now need to click Start and let the SRM magic happen.

At this point, we want to see what’s going on so let’s jump onto the Recovery Steps Tab and expand all of the stages.

So what’s going on here? Well, let’s go through this step by step

Step 1 SRM will replicate the storage if you have selected this option. We chose not to, hence the status of ‘not applicable’

Step 2 SRM will bring any hosts out of Standby if you are using Distributed Power Management at the DR site

Step 3 SRM will suspend non-critical VMs at the DR site so that the resources are available to be used by the virtual machines we are testing

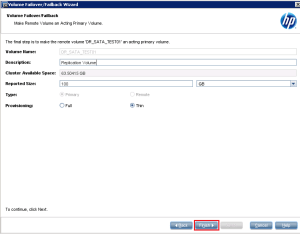

Step 4 This is probably the most important step to understand. SRM doesn’t want to interfere with the replication process. If it did, it would have to make the replicated LUN, in this case PR_SATA_TEST01_RS_Rmt.16, Read/Write, and we don’t want to do that. So instead SRM uses the SRA to invoke a point-in-time snapshot of the read-only PR_SATA_TEST01_RS_Rmt.16, which it turns into a Read/Write copy so that the virtual machine can be accessed.
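If the snapshot idea is hard to picture, here is a rough Python sketch of it. To be clear, this is only a toy model I’ve put together to illustrate the principle, not how SRM, the SRA or HP StoreVirtual actually implement snapshots. The class names, the block layout and the snapshot name are all made up. The replica stays read-only, and anything the test VM writes lands in the snapshot instead.

```python
class ReadOnlyReplica:
    """Toy stand-in for the replicated (read-only) volume at the DR site."""

    def __init__(self, name, blocks):
        self.name = name
        self._blocks = dict(blocks)  # block number -> data

    def read(self, block):
        return self._blocks[block]

    def snapshot(self, snap_name):
        # The snapshot references the replica; nothing is copied or modified.
        return WritableSnapshot(snap_name, self)


class WritableSnapshot:
    """Toy Read/Write copy: writes are kept separately from the replica."""

    def __init__(self, name, base):
        self.name = name
        self._base = base
        self._changes = {}  # only the blocks written during the test

    def read(self, block):
        # Prefer data written during the test, else fall back to the replica.
        if block in self._changes:
            return self._changes[block]
        return self._base.read(block)

    def write(self, block, data):
        self._changes[block] = data


replica = ReadOnlyReplica("PR_SATA_TEST01_RS_Rmt.16", {0: "boot", 1: "data"})
test_copy = replica.snapshot("test-recovery-snapshot")  # hypothetical name
test_copy.write(1, "changed during test")

print(test_copy.read(1))  # the test VM sees its own change
print(replica.read(1))    # the replica is untouched
```

Discarding `test_copy` leaves the replica exactly as it was, which is the whole point of testing against a snapshot rather than the replicated volume itself.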

I want to show you this from the HP StoreVirtual VSA perspective. If you look below, our replicated volumes haven’t been touched, but we do have a Read/Write copy of PR_SATA_TEST01_RS_Rmt.16 (see, it’s dark blue)

Steps 5-9 SRM powers on the virtual servers in priority order.

Boom, the test is complete!

Let’s nip over to VMF-ADMIN02 which is my DR vCenter and see what’s going down.

Cool, VMF-TEST02 is up and running, it’s got the same IP address, it’s been presented with the snapshot of the read-only DR volume PR_SATA_TEST01, and SRM has put VMF-TEST01 into an srm-recovery-portgroup

Good skills, let’s roll back the test. Head back to VMF-ADMIN01, which is the Production vCenter, and click Cleanup

Essentially, SRM just reverses the process above. If all went well, you should see this

Let’s double check the CMC to make sure everything is back to the way it should be. Voilà, it is!

If, like me, you want to see what’s going on in more detail, run the Test again, but this time make sure you go over to VMF-ADMIN02 and select Tasks & Events at Root level. This will show you everything that SRM does to perform a test failover. Pretty impressive to say the least.

Change IP Address

We probably want to change the IP address details of VMF-TEST01 when it fails over so it’s on the right subnet, using the right default gateway and DNS server. To do this, select the Virtual Machines Tab and then select Configure Recovery

Select IP Settings – NIC 1, place a tick in Customize IP settings during recovery, then click Configure Protection and enter your IP details. Rinse and repeat for Configure Recovery
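It’s easy to fat-finger an address in this dialog, so a quick sanity check doesn’t hurt. Here is a minimal sketch using Python’s standard `ipaddress` module to confirm that the default gateway sits on the same subnet as the IP you plan to give the VM at DR. The function name and the addresses are examples I’ve invented for illustration, not values from my lab or anything SRM provides.

```python
import ipaddress


def gateway_on_same_subnet(vm_ip, prefix_length, gateway):
    """Return True if the gateway is inside the VM's subnet."""
    network = ipaddress.ip_interface(f"{vm_ip}/{prefix_length}").network
    return ipaddress.ip_address(gateway) in network


# Made-up DR addressing, purely for illustration
print(gateway_on_same_subnet("192.168.20.50", 24, "192.168.20.1"))  # True
print(gateway_on_same_subnet("192.168.20.50", 24, "192.168.10.1"))  # False
```

If the second case is what you get for your own values, the VM would come up at DR unable to reach its gateway, so it’s worth catching before you run the test.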

For those of you in the UK, here’s one I made earlier

Hit OK and perform another Test Recovery. Fingers crossed, we should see that the IP address changes at the DR site. Time for a quick brew whilst we run the test.

The results are in and we have success!

Let’s roll back and make some more config changes

Registering DNS

My real world experience using SRM is that we need to do more than just change the IP address; it’s a good idea to update DNS as well. Now I’m not a ‘script guy’, so I use good old-fashioned batch files.

On VMF-TEST01 we are going to create the following batch file:

@echo off

ipconfig /registerdns

exit

The batch file will be called ipconfigupdate.bat and saved in the root of the C: drive on VMF-TEST01

Cool, now let’s configure SRM to register the new DNS details.

Back to the Virtual Machines Tab and Configure Recovery for VMF-TEST01

We are going to select a ‘Post Power On Step’ and then Add

We are going to use ‘Command on Recovered VM’, give the Step the name ‘Ipconfig Register DNS’, set the content to c:\windows\system32\cmd.exe /c c:\ipconfigupdate.bat and set the Timeout value to 1 minute

The first part, c:\windows\system32\cmd.exe, tells SRM where to find the application you want to run, in this case the Windows Command Prompt. The second part, /c c:\ipconfigupdate.bat, tells SRM to run the batch file under the Windows Command Prompt.
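Because the command has to be typed by hand, it’s worth checking it before you run a test. The sketch below is a small Python helper of my own (not part of SRM) that splits a command of this shape into program, switch and script, and uses the standard `ntpath` module to check that both paths are absolute Windows paths. It assumes neither path contains spaces.

```python
import ntpath


def check_command(command):
    """Return True if command looks like '<abs program path> /c <abs script path>'."""
    parts = command.split(" ", 2)
    if len(parts) != 3:
        return False
    program, switch, script = parts
    return (
        ntpath.isabs(program)
        and switch.lower() == "/c"
        and ntpath.isabs(script)
    )


# Full paths with backslashes pass the check
print(check_command(r"c:\windows\system32\cmd.exe /c c:\ipconfigupdate.bat"))
# A bare program name does not
print(check_command(r"cmd.exe /c c:\ipconfigupdate.bat"))
```

The check is deliberately strict about full paths, on the cautious assumption that you don’t want to rely on whatever search path the command happens to run with on the recovered VM.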

OK, now we need to think about how we are going to test this. If VMF-TEST01 fails over into the Auto Network Port Group, it won’t be able to communicate with the Domain Controller in the DR site. So, ladies and gentlemen, we are going to do what’s known in the IT world as a ‘frig’ to test this.

We are going to shut down VMF-TEST01 at the Production Site and then change the Auto Network to DRLAN, so that when VMF-TEST01 comes up at DR it can communicate with my DC.

If you remember we need to edit the Recovery Plan RP_SATA_TEST01 to change the test Port Group.

Right then, let’s run a Test recovery and see if my ‘frig’ works! It might be time for a brew, as when we customize the IP address, SRM will bring the guest VM online, change the IP addresses, shut it down, power it back on, wait for VMware Tools and then run our batch file.

Awesome, well the Test recovery was a success.

Let’s check VMF-TEST01. Well, it’s got the right IP address and the right Port Group. I’m going to attempt a ping... success! I feel like the A-Team when a plan comes together.
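A ping to the IP proves the network side. To confirm that the DNS record itself has been updated, you could also check what the name resolves to. Here is a minimal sketch using Python’s standard `socket` module. The host name and IP are placeholders I’ve made up, and the example runs against a stub resolver so it doesn’t touch a real DNS server. Bear in mind that a cached lookup on the machine you run it from can still return the old address.

```python
import socket


def dns_points_to(hostname, expected_ip, resolve=socket.gethostbyname):
    """Return True if hostname resolves to expected_ip.

    `resolve` can be swapped out, which makes the check easy to try
    without a real DNS server.
    """
    try:
        return resolve(hostname) == expected_ip
    except OSError:  # socket.gaierror (lookup failed) is a subclass of OSError
        return False


def stub_resolver(name):
    """Stand-in for DNS, holding one made-up record."""
    records = {"vmf-test01.example.local": "192.168.20.50"}
    if name not in records:
        raise socket.gaierror("name not found")
    return records[name]


print(dns_points_to("vmf-test01.example.local", "192.168.20.50",
                    resolve=stub_resolver))
```

Drop the `resolve=` argument to query your real DNS, using your VM’s name and the IP address you expect it to have at DR.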

TOP TIP: Don’t forget to change your DNS back

Virtual Machine Priority Order

The last item I want to cover off is Virtual Machine Priority Order. We have a range of 1 to 5. Priority 1 VMs start first and priority 5 VMs start last. The cool thing about this is that it waits for VMware Tools to start before the next VM is powered on.

To configure this, go back to the Virtual Machines Tab, right click VMF-TEST01, select Priority and then the level you want.
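To make the ordering concrete, here is a small Python sketch that groups VMs by priority the way I’ve described: priority 1 first, 5 last. It only models the ordering and is not SRM code. The VM names are hypothetical, borrowed from the Exchange example at the start of this post.

```python
from itertools import groupby


def power_on_groups(vm_priorities):
    """Group VM names by priority, lowest number (started first) to highest."""
    for name, priority in vm_priorities.items():
        if priority not in range(1, 6):
            raise ValueError(f"{name}: priority must be between 1 and 5")
    ordered = sorted(vm_priorities.items(), key=lambda item: item[1])
    return [
        [name for name, _ in group]
        for _, group in groupby(ordered, key=lambda item: item[1])
    ]


# Hypothetical VM names: Mailbox server first, then CAS/Hub Transport.
plan = {"EXCH-CAS01": 2, "EXCH-MBX01": 1}
for group in power_on_groups(plan):
    print(group)
```

The sketch doesn’t model the wait for VMware Tools between power-ons, which is the part that makes the priority order useful for something like Exchange.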

Boom job done!

That’s it for this post. In the next blog entry we are going to fail over, reprotect and fail back.