Distributed Power Management (DPM) is an excellent feature within ESXi 5. It’s been around for a while and essentially migrates workloads onto fewer hosts so that the idle physical servers can be placed into standby mode when they aren’t being utilised.

Finance dudes like it as it saves ‘wonga’ and Marketing dudettes like it as it gives ‘green credentials’. Everyone’s a winner!

vCenter utilises IPMI, iLO and WOL to ‘take’ the physical server out of standby mode. vCenter tries to use IPMI first, then iLO and lastly WOL.
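To make that fallback order concrete, here is a minimal Python sketch. It is purely illustrative and is not how vCenter implements it: the `pick_wake_protocol` function and the `WAKE_ORDER` tuple are names I’ve made up, and the only thing taken from the above is the order itself.

```python
from typing import Iterable, Optional

# Order described above: IPMI first, then iLO, and WOL as the last resort.
WAKE_ORDER = ("IPMI", "iLO", "WOL")


def pick_wake_protocol(supported: Iterable[str]) -> Optional[str]:
    """Return the first protocol in WAKE_ORDER that the host supports.

    `supported` is a hypothetical list of protocol names for one host,
    compared case-insensitively. Returns None if nothing usable is found.
    """
    available = {name.lower() for name in supported}
    for protocol in WAKE_ORDER:
        if protocol.lower() in available:
            return protocol
    return None
```

For a host with no IPMI or iLO configured, like the Microservers used in this post, only WOL is left, which is why the rest of this guide is about getting WOL working.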

I was configuring Distributed Power Management and thought I would see if a ‘how to’ existed. Perhaps my ‘Google magic’ was not working, but I couldn’t find a guide on configuring WOL with ESXi 5. So here it is; let’s crack on and get it configured.

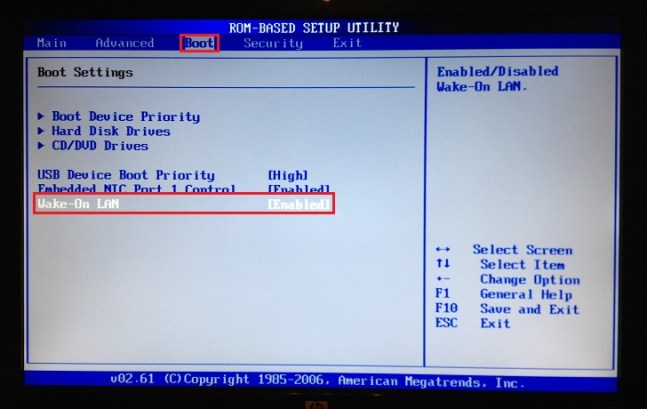

Step 1

First things first, we need to check our BIOS supports WOL and enable it. I use a couple of HP N40L Microservers and the good news is these bad boys do.

Step 2

vCenter uses the vMotion network to send the ‘magic’ WOL packet. So obviously you need to check that vMotion is working. For the purposes of this how to, I’m going to assume you have this nailed.
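If you are curious what the ‘magic’ packet actually looks like, it is simply 6 bytes of 0xFF followed by the target NIC’s MAC address repeated 16 times. The sketch below builds one and sends it as a UDP broadcast. This is not what vCenter runs; it is a rough stand-in you could use to test WOL by hand from a machine on the same layer 2 network. The function names, the broadcast address and port 9 are my own assumptions (port 9 is just a common convention).

```python
import socket


def build_magic_packet(mac: str) -> bytes:
    """Build a WOL magic packet: 6 bytes of 0xFF, then the MAC repeated 16 times."""
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"expected a 6-byte MAC address, got {mac!r}")
    mac_bytes = bytes.fromhex(hex_digits)  # raises ValueError on non-hex input
    return b"\xff" * 6 + mac_bytes * 16


def send_magic_packet(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Send the magic packet as a UDP broadcast (address and port are assumptions)."""
    packet = build_magic_packet(mac)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (broadcast, port))
```

Calling `send_magic_packet("00:11:22:33:44:55")` with the MAC of the host’s vMotion NIC (the one shown here is a placeholder) should wake a host whose BIOS and NIC have WOL enabled.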

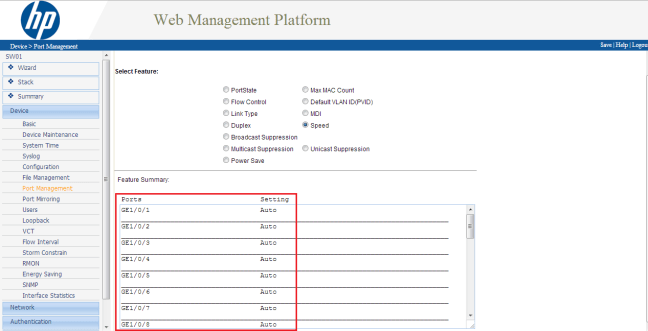

Step 3

Check your switch config. Eh, don’t you mean my vSwitch config, Craig? Nope, I mean your physical switch config. The ports that your vMotion network plugs into need to be set to ‘Auto’ (auto-negotiate) rather than a fixed speed, because with certain manufacturers’ NICs WOL only works its ‘magic’ if the link can drop to 100Mbps while the host is in standby.

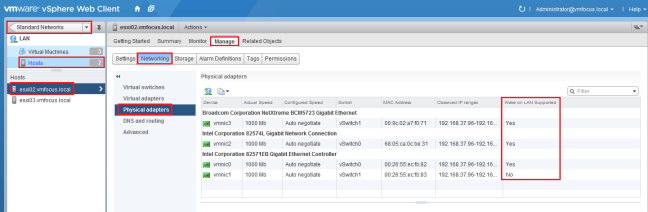

Step 4

Now we have checked our physical environment, let’s check our virtual environment. Go to your ‘physical adapters’ to determine if WOL is supported.

This can be found in the vSphere Web Client (which I’m trying to use more) under Standard Networks > Hosts > ESXi02 > Manage > Networking > Physical Adapters

We can see that every adapter supports WOL except for vmnic1.

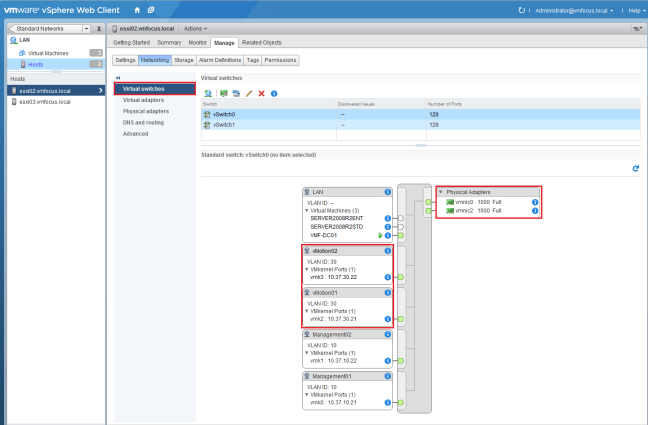

Step 5

So we need to check our vMotion network to ensure that vmnic1 isn’t being used.

Hop up to ‘virtual switches’ and check your config. Good news is I’m using vmnic0 and vmnic2 so we are golden.
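If you have more than a couple of hosts, the same check is easy to script once you have the adapter details written down. The sketch below is only an illustration of the logic: the dictionary is typed in by hand from what the Physical Adapters screen shows on my host (vmnic1 is the odd one out), and `uplinks_without_wol` is a made-up helper, not part of any VMware tooling.

```python
from typing import Dict, List


def uplinks_without_wol(wol_support: Dict[str, bool], vmotion_uplinks: List[str]) -> List[str]:
    """Return the vMotion uplinks that do NOT support WOL.

    Adapters missing from `wol_support` are treated as unsupported,
    so an unknown NIC is flagged rather than silently trusted.
    """
    return [nic for nic in vmotion_uplinks if not wol_support.get(nic, False)]


# Values copied by hand from this post's host; yours will differ.
wol_support = {"vmnic0": True, "vmnic1": False, "vmnic2": True}
vmotion_uplinks = ["vmnic0", "vmnic2"]

print(uplinks_without_wol(wol_support, vmotion_uplinks))  # prints []
```

An empty list means every vMotion uplink can receive the magic packet. If vmnic1 had been one of the uplinks, it would show up in the output and you would need to rethink the vSwitch config.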

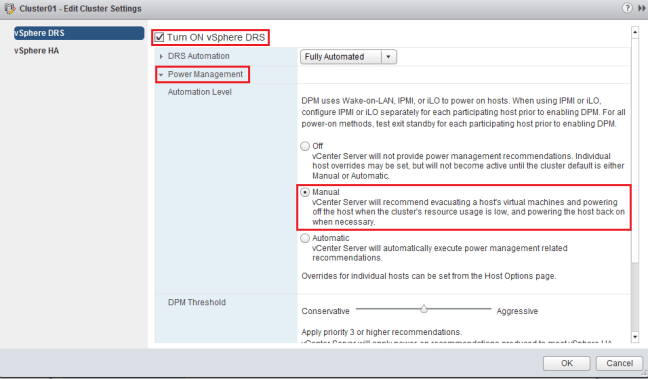

Step 6

Let’s enable Distributed Power Management. Head over to vCenter > Cluster > Manage > vSphere DRS > Edit, place a tick in Turn ON vSphere DRS and select Power Management. Make sure you set the Automation Level to Manual for now. We don’t want servers being powered off that can’t come back on again!

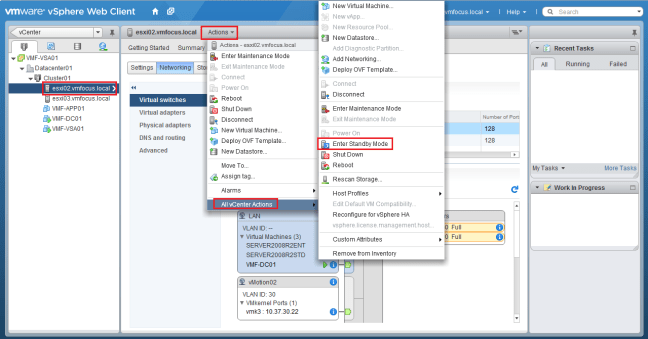

Step 7

Time to test Distributed Power Management! Select your ESXi Host, choose Actions from the middle menu bar and select All vCenter Actions > Enter Standby Mode

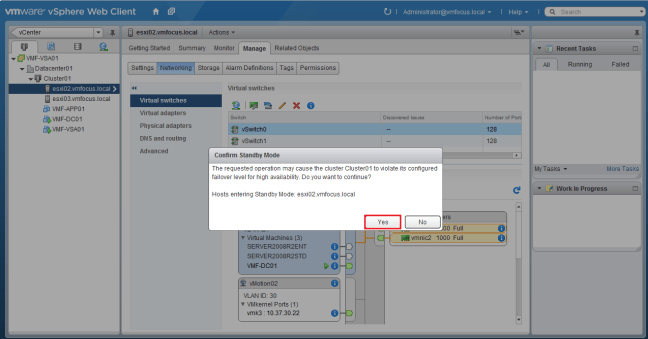

Ah, we have a dialogue box appear saying ‘the requested operation may cause the cluster Cluster01 to violate its configured failover level for high availability. Do you want to continue?’

The man from Del Monte, he says ‘yes’, we want to continue! The reason for the message is that my HA Admission Control is set to 50%, so invoking a Host shut down violates this setting.
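To see why the warning fires, here is a back-of-the-envelope sketch of the percentage check. It is a deliberately simplified model (spare capacity as a percentage of total capacity, one resource only) and not vCenter’s exact calculation. The host sizes and the VM reservation figure are invented for illustration; only the 50% setting comes from my cluster.

```python
from typing import List


def failover_capacity_pct(host_capacity_gb: List[float], reserved_gb: float) -> float:
    """Spare capacity as a percentage of total capacity (simplified model)."""
    total = sum(host_capacity_gb)
    if total <= 0:
        return 0.0
    return max(0.0, (total - reserved_gb) / total * 100)


ADMISSION_CONTROL_PCT = 50.0   # the setting mentioned above
reserved_gb = 6.0              # hypothetical: memory reserved by powered-on VMs

both_hosts = failover_capacity_pct([8.0, 8.0], reserved_gb)  # hypothetical 8 GB hosts
one_host = failover_capacity_pct([8.0], reserved_gb)         # after one enters standby

print(both_hosts, both_hosts >= ADMISSION_CONTROL_PCT)  # prints 62.5 True
print(one_host, one_host >= ADMISSION_CONTROL_PCT)      # prints 25.0 False
```

With both hosts up there is plenty of headroom, but once one host goes into standby the spare capacity drops below 50% in this model, which is the sort of situation the dialogue box is warning about.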

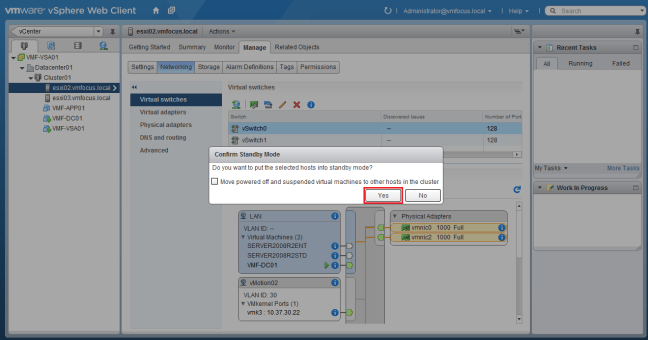

vCenter is rather cautious and quite rightly so. Now it’s asking if we want to ‘move powered off and suspended virtual machines to other hosts in the cluster’. I’m not going to place a tick in the box and will select Yes.

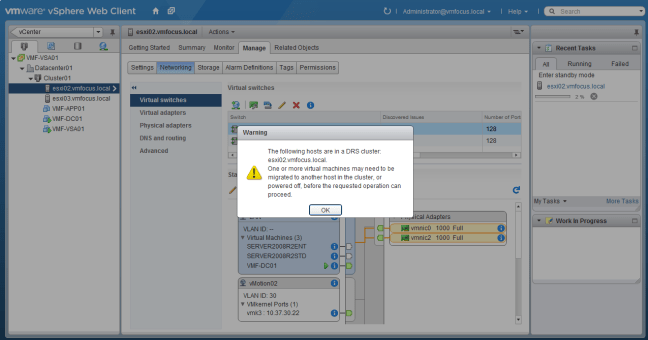

We have a Warning: ‘one or more virtual machines may need to be migrated to another host in the cluster, or powered off, before the requested operation can proceed’. This makes perfect sense: as we are invoking DPM, we need to migrate any VMs onto another host.

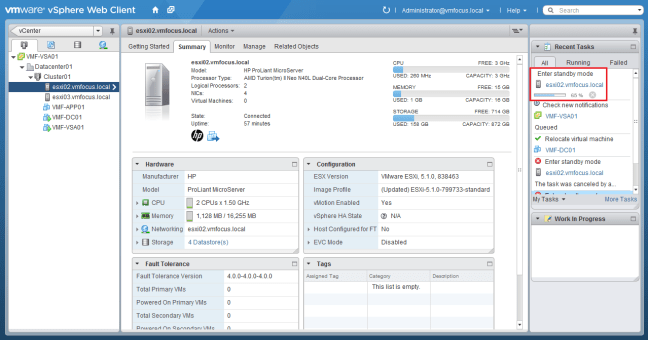

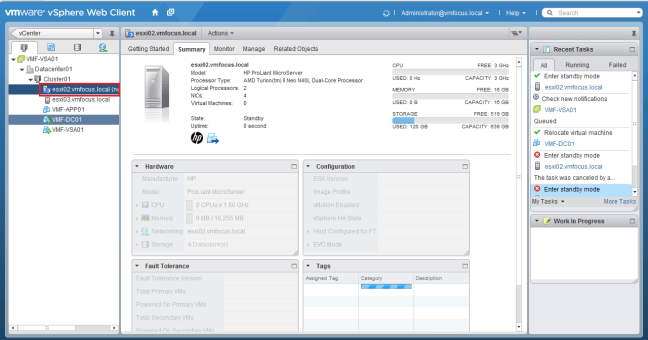

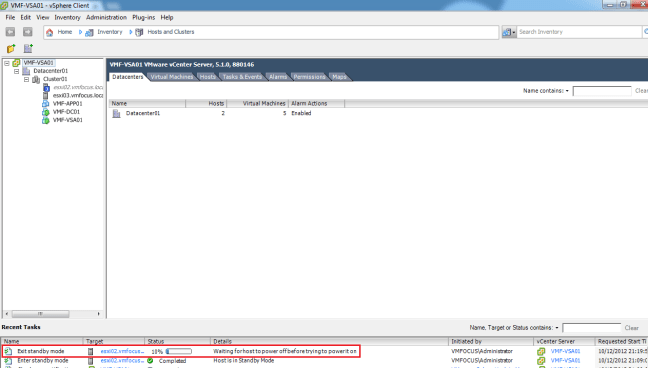

A quick vMotion later, and we can now see that ESXi02 is entering Standby Mode

You might as well go make a cup of tea as it takes the vSphere Client an absolute age to figure out the host is in Standby Mode.

Step 8

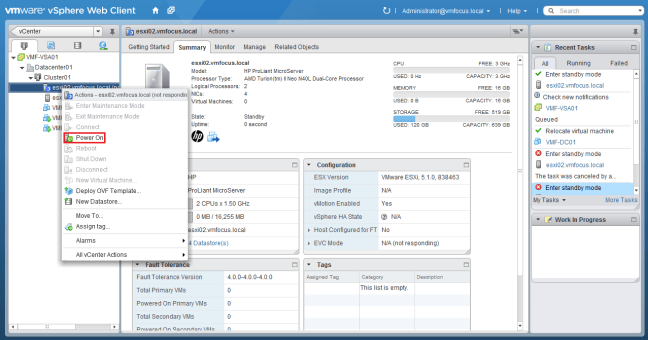

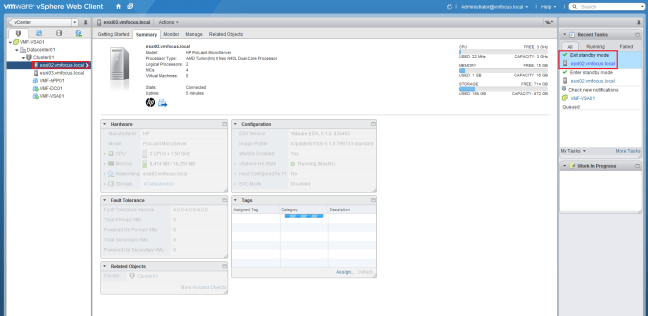

Let’s power the host back up again. Right-click your Host and select Power On.

Interestingly, we see the power on task running in the vSphere Web Client, however if you jump into the vSphere Client and check the recent tasks pane, you see that it mentions ‘waiting for host to power off before trying to power it on’

This had me puzzled for a minute and then I heard my HP N40L Microserver boot and all was good with the world. So ignore this piece of information from vCenter.

Step 9

Boom, our ESXi Host is back from Standby Mode!

Rinse and repeat for your other ESXi Hosts and then set Distributed Power Management to Automated and you are good to go.