So you have put together an epic vSphere Metro Storage Cluster on your HP StoreVirtual SAN (formerly LeftHand), following these rules:

- Creating volumes for each site so that it accesses its datastore locally rather than going across the inter-site link

- Creating DRS ‘host should’ rules so that VMs run on the ESXi Hosts local to the volumes and datastores they are accessing.

The gotcha occurs when you have either a StoreVirtual Node failure or a StoreVirtual Node rebooted for maintenance. Let me explain why.

In this example we have a Management Group called SSDMG01 which contains:

- SSDVSA01 which is in Site 1

- SSDVSA02 which is in Site 2

- SSDFOM which is in Site 3

We have a single volume called SSDVOL01 which is located at Site 1

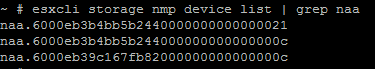

StoreVirtual uses a ‘Virtual IP’ Address to ensure fault tolerance for iSCSI access. You can view this under your Cluster, then iSCSI, within the Centralized Management Console. In my case it’s 10.37.10.2.

Even though iSCSI connections are made via the Virtual IP Address, each Volume goes via a ‘Gateway Connection’ which is essentially just one of the StoreVirtual Nodes. To check which gateway your ESXi Hosts are using to access the volumes, select your volume and then choose iSCSI Sessions.

In my case the ESXi Hosts are using SSDVSA01 to access the volume SSDVOL01 which is correct as they are at Site 1.

Let’s quickly introduce a second Volume called SSDVOL02, which we want to be in Site 1 as well. Let’s take a look at the iSCSI sessions for SSDVOL02.

Crap, they are going via SSDVSA02 which is at the other site, causing latency issues. Can I do anything about this in the CMC? Not that I can find.
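If you have more than a couple of volumes, eyeballing the iSCSI Sessions tab gets old quickly. Here is a minimal sketch of the same check in Python. It assumes you have copied the Gateway Connection for each volume out of the CMC by hand; the dictionaries and the `cross_site_volumes` function are my own illustration, not anything StoreVirtual provides.

```python
# Site of each StoreVirtual node, as described in this post.
NODE_SITE = {"SSDVSA01": "Site 1", "SSDVSA02": "Site 2"}

# Site whose ESXi Hosts should be using each volume (both are meant for Site 1).
VOLUME_SITE = {"SSDVOL01": "Site 1", "SSDVOL02": "Site 1"}

# Gateway Connection per volume, copied by hand from the iSCSI Sessions tab.
GATEWAYS = {"SSDVOL01": "SSDVSA01", "SSDVOL02": "SSDVSA02"}


def cross_site_volumes(gateways, volume_site, node_site):
    """Return the volumes whose gateway node sits in a different site."""
    return sorted(
        volume
        for volume, node in gateways.items()
        if node_site[node] != volume_site[volume]
    )


if __name__ == "__main__":
    for volume in cross_site_volumes(GATEWAYS, VOLUME_SITE, NODE_SITE):
        print(f"{volume} is going via {GATEWAYS[volume]} across the inter-site link")
```

With the sessions shown above, only SSDVOL02 gets flagged.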

HP StoreVirtual is actually being very clever here: it has load balanced the iSCSI connections for the volumes across both nodes in case of a node failure. In this case SSDVOL01 goes via SSDVSA01 and SSDVOL02 goes via SSDVSA02. If you have ever experienced a StoreVirtual node failure, you will know that it takes around 5 seconds for the iSCSI sessions to be remapped, leaving your VMs without access to their HDDs for this time.

What can you do about this? When creating your volumes, create them in the order that gives the right site affinity to the ESXi Hosts, since we know that HP StoreVirtual just round robins the Gateway Connection.
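To plan the creation order before touching the CMC, the round robin behaviour can be modelled in a few lines of Python. This is only a sketch of what I observed, assuming the gateway simply cycles through the nodes in order starting with SSDVSA01; `plan_gateways` is my own helper and `SITE2VOL01` is a hypothetical volume name for a Site 2 datastore.

```python
from itertools import cycle

# Node order is an assumption: Site 1 node first, then the Site 2 node.
NODES = ["SSDVSA01", "SSDVSA02"]


def plan_gateways(volumes_in_creation_order, nodes=NODES):
    """Pair each volume with a node, cycling through the nodes in order."""
    return dict(zip(volumes_in_creation_order, cycle(nodes)))


if __name__ == "__main__":
    # Two Site 1 volumes created back to back: the second lands on Site 2.
    print(plan_gateways(["SSDVOL01", "SSDVOL02"]))

    # Creating a (hypothetical) Site 2 volume in between keeps both
    # Site 1 volumes on SSDVSA01 under this model.
    print(plan_gateways(["SSDVOL01", "SITE2VOL01", "SSDVOL02"]))
```

The first plan reproduces the situation above, and the second shows why alternating the creation order between sites gives each volume a local gateway, at least until a node fails.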

That’s all very well and good, but what happens when I have a site failure? Let’s go over this now. I’m going to pull the power from SSDVSA01, which is the Gateway Connection for SSDVOL01. It actually has a number of VMs running on it.

Man down! As you can see, we have a critical event against SSDVSA01 and the volume SSDVOL01 status is ‘data protection degraded’.

Let’s take a quick look at the iSCSI sessions for SSDVOL01. They should be using the Gateway Connection SSDVSA02.

Yep, all good, it’s what we expected. Now let’s power SSDVSA01 back up again and see what happens. You will notice that the HP StoreVirtual resyncs the volume between the Nodes and then it’s shown as Status: Normal.

Here’s the gotcha, the iSCSI sessions will continue to use SSDVSA02 in Site 2 even though SSDVSA01 is back online at Site 1.

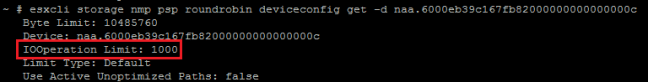

After around five minutes StoreVirtual will automatically rebalance the iSCSI Gateway Connections. Great, you say. Ah, but we have another gotcha. As SSDVOL02 has now been online the longest, StoreVirtual will use SSDVSA01 as its Gateway Connection and SSDVOL01 stays on SSDVSA02, meaning we are still going across the inter-site link. So, to summarise our current situation:

- SSDVOL01 using Site 2 SSDVSA02 as its Gateway Connection

- SSDVOL02 using Site 1 SSDVSA01 as its Gateway Connection

Not really the position we want to be in!

We can get down and dirty using CLIQ to manually rebalance SSDVOL01 onto SSDVSA01, perhaps? Let’s give it a whirl, shall we.

Log in to your VIP address using SSH, but on port 16022, and enter your credentials.

Then we need to run the command ‘rebalanceVIP volumeName=SSDVOL01’
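If you want to keep the two steps handy for next time, here is a small Python sketch that just builds the SSH command line and the CLIQ command as strings. It doesn't connect to anything. The user name `admin` is a hypothetical placeholder for your own credentials, and both helper functions are mine rather than part of any HP tooling.

```python
import shlex

VIP = "10.37.10.2"   # cluster Virtual IP from this post
CLIQ_PORT = 16022    # SSH port used for CLIQ in this post
USER = "admin"       # hypothetical user name; use your own credentials


def cliq_ssh_command(user=USER, host=VIP, port=CLIQ_PORT):
    """Build the argument list for an interactive SSH session to CLIQ."""
    return ["ssh", "-p", str(port), f"{user}@{host}"]


def rebalance_command(volume):
    """Build the CLIQ command to type once you are logged in."""
    return f"rebalanceVIP volumeName={volume}"


if __name__ == "__main__":
    print(shlex.join(cliq_ssh_command()))
    print(rebalance_command("SSDVOL01"))
```

Run the first line from a shell, log in, then paste the second line at the CLIQ prompt.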

If you’re quick and flick over to the CMC, you will see the Gateway Connection status as ‘failed’. This is correct, don’t panic.

Do we have SSDVOL01 using SSDVSA01? Nah!

The only way to resolve this is to either Storage vMotion your VMs onto a volume with enough capacity at the correct site or reboot your StoreVirtual Node in Site 2.

In summary, even though HP StoreVirtual uses a Virtual IP Address, each volume is tied to a Gateway Connection via a StoreVirtual Node, and you are unable to change the iSCSI connections manually without rebooting the StoreVirtual Nodes.

Hopefully, HP might fix this with the release of LeftHand OS 10.1.